Model monitoring overview#

Note

This is currently a beta feature.

In this section

Architecture#

The model monitoring process flow starts with collecting operational data. The operational data are converted to vectors, which are posted to the Model Server.

The model server is then wrapped around a machine learning model that uses a function to calculate predictions based on the available vectors.

Next, the model server creates a log for the input and output of the vectors, and the entries are written to the production data stream (a v3io stream).

While the model server is processing the vectors, a Nuclio operation monitors the log of the data stream and is triggered when a new log entry is detected.

The Nuclio function examines the log entry, processes it into statistics which are then written to the statistics databases (parquet file, time series database and key value database).

The parquet files are written as a feature set under the model monitoring project. The parquet files can be read either using pandas.read_parquet or feature_set.get_offline_features, like any other feature set.

In parallel, a scheduled MLRun job runs reading the parquet files, performing drift analysis. The drift analysis data is stored so that the user can retrieve it in the Iguazio UI or in a Grafana dashboard.

Drift analysis#

The model monitoring feature provides drift analysis monitoring. Model drift in machine learning is a situation where the statistical properties of the target variable (what the model is trying to predict) change over time. In other words, the production data has changed significantly over the course of time and no longer matches the input data used to train the model. So, for this new data, accuracy of the model predictions is low. Drift analysis statistics are computed once an hour. For more information see Concept Drift.

Common terminology#

The following terms are used in all the model monitoring pages:

Total Variation Distance (TVD) — The statistical difference between the actual predictions and the model's trained predictions.

Hellinger Distance — A type of f-divergence that quantifies the similarity between the actual predictions, and the model's trained predictions.

Kullback–Leibler Divergence (KLD) — The measure of how the probability distribution of actual predictions is different from the second model's trained reference probability distribution.

Model Endpoint — A combination of a model and a runtime function that can be a deployed Nuclio function or a job runtime. One function can run multiple endpoints; however, statistics are saved per endpoint.

See also

Model monitoring using the Iguazio platform interface#

Iguazio's model monitoring data is available for viewing through the regular platform interface. The platform provides four information pages with model monitoring data.

Select a project from the project tiles screen.

From the project dashboard, press the Models tile to view the models currently deployed.

Press Model Endpoints from the menu to display a list of monitored endpoints.

If the Model Monitoring feature is not enabled, the endpoints list is empty.

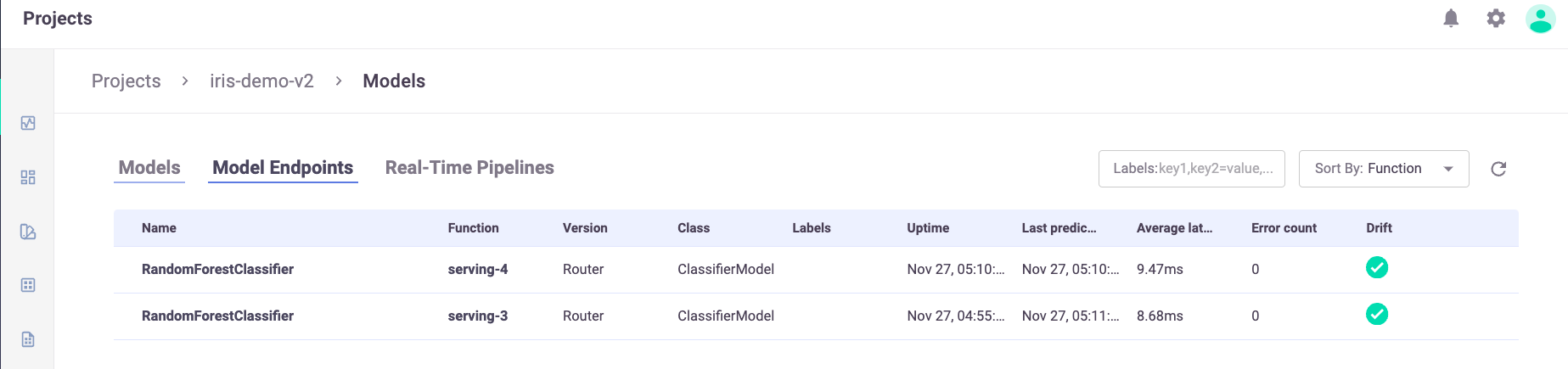

Model endpoint summary list#

The Model Endpoints summary list provides a quick view of the model monitoring data.

The summary page contains the following fields:

Name — the name of the model endpoint

Function — the name of the related function

Version — user configured version taken from model deployment

Class — the implementation class that is used by the endpoint

Labels — user configurable tags that are searchable

Uptime — first request for production data

Last Prediction — most recent request for production data

Average Latency — Average latency time of serving a single event in the last hour

Error Count — includes prediction process errors such as operational issues (For example, a function in a failed state), as well as data processing errors (For example, invalid timestamps, request ids, type mismatches etc.)

Drift — indication of drift status (no drift (green), possible drift (yellow), drift detected (red))

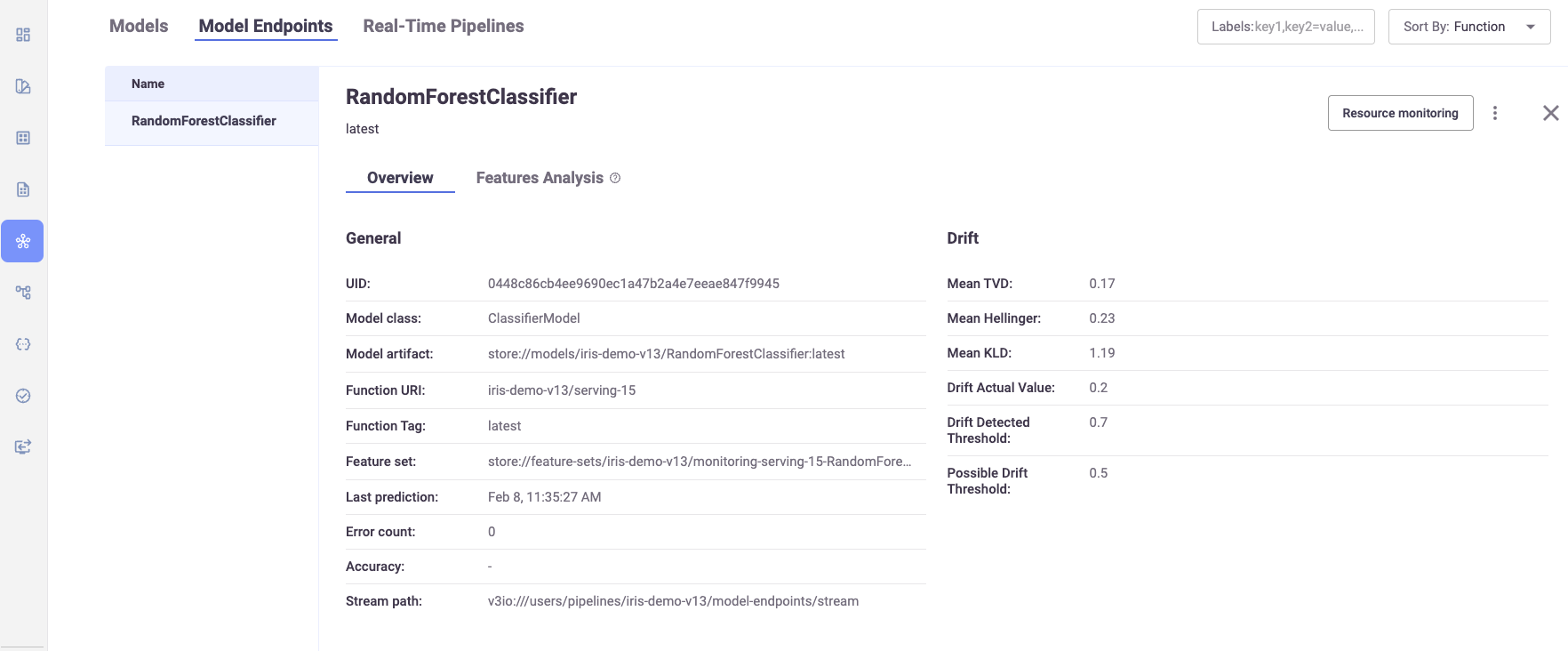

Model endpoint overview#

The Model Endpoints overview pane displays general information about the selected model.

The Overview page contains the following fields:

UUID — the ID of the deployed model

Model Class — the implementation class that is used by the endpoint

Model Artifact — reference to the model's file location

Function URI — the MLRun function to access the model

Function Tag — the MLRun function tag

Feature set — the monitoring feature set that points to the monitoring parquet directory

Last Prediction — most recent request for production data

Error Count — includes prediction process errors such as operational issues (For example, a function in a failed state), as well as data processing errors (For example, invalid timestamps, request ids, type mismatches etc.)

Accuracy — a numeric value representing the accuracy of model predictions (N/A)

Stream path — the input and output stream of the selected model

Mean TVD — the mean value of the Total Variance Distance of the model features and labels

Mean Hellinger — the mean value of the Hellinger Distance of the model features and labels

Mean KLD — the mean value of the KL Divergence of the model features and labels

Drift Actual Value — the resulted drift value of the latest drift analysis calculation.

Drift Detected Threshold — pre-defined value to determine a drift

Possible Drift Threshold — pre-defined value to determine a possible drift

Note

Click on Resource monitoring to get the relevant Grafana Details Dashboard that displays real-time performance data of the selected model in detail. In addition, use the ellipsis to view the YAML resource file for details about the monitored resource.

Model features analysis#

The Features Analysis pane provides details of the drift analysis in a table format with each feature and label in the selected model on its own line.

Each field has a pair of columns. The Expected column displays the results from the model training phase, and the Actual column displays the results from the live production data. The following fields are available:

Mean

STD (Standard deviation)

Min

Max

TVD

Hellinger

KLD

Histograms—the approximate representation of the distribution of the data. Hover over the bars in the graph for the details.

Model monitoring using Grafana dashboards#

You can deploy a Grafana service in your Iguazio instance and use Grafana Dashboards to view model monitoring details. There are three dashboards available:

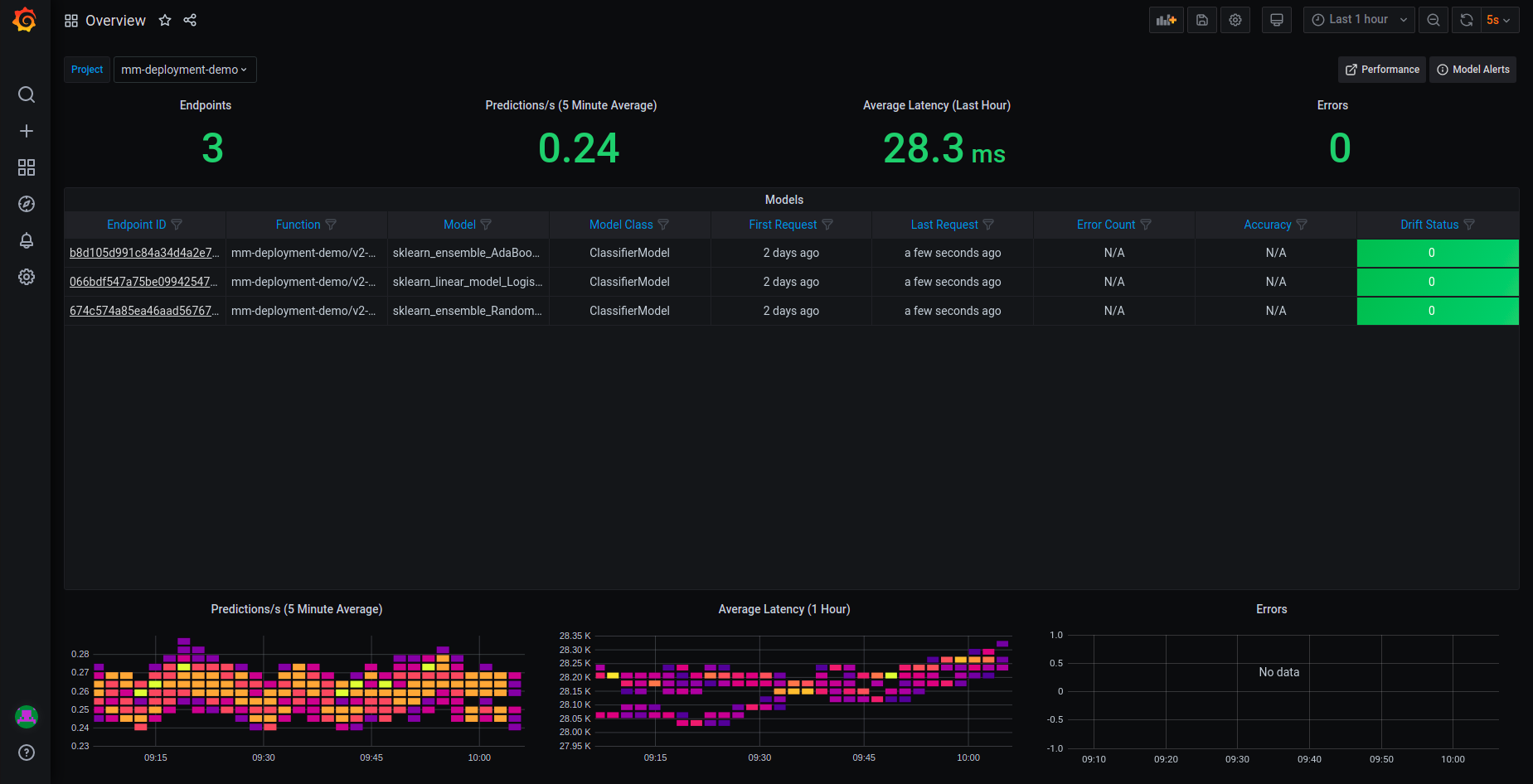

Model Monitoring Overview dashboard#

The Overview dashboard displays the model endpoint IDs of a specific project. Only deployed models with Model Monitoring enabled are displayed. Endpoint IDs are URIs used to provide access to performance data and drift detection statistics of a deployed model.

The Overview pane provides details about the performance of all the deployed and monitored models within a project. You can change projects by choosing a new project from the Project dropdown. The Overview dashboard displays the number of endpoints in the project, the average predictions per second (using a 5-minute rolling average), the average latency (using a 1-hour rolling average), and the total error count in the project.

Additional details include:

Endpoint ID — the ID of the deployed model. Use this link to drill down to the model performance and details panes.

Function — the MLRun function to access the model

Model — user defined name for the model

Model Class — the implementation class that is used by the endpoint

First Request — first request for production data

Last Request — most recent request for production data

Error Count — includes prediction process errors such as operational issues (for example, a function in a failed state), as well as data processing errors (for example, invalid timestamps, request ids, type mismatches etc.)

Drift Status — no drift (green), possible drift (yellow), drift detected (red)

At the bottom of the dashboard are heat maps for the Predictions per second (5 minute average), Average Latency (one hour average), and Errors. See How to Read a Heat Map for more details.

Click an endpoint ID to drill down to the performance details of that model.

How to read a heat map#

Heat maps are used to analyze trends and to instantly transform and enhance data through visualizations. This helps to quickly identify areas of interest, and empower users to explore the data in order to pinpoint where there may be potential issues. A heat map uses a matrix layout with colour and shading to show the relationship between two categories of values (x and y axes), so the darker the cell, the higher the value. The values presented along each axis correspond to a cell which is color-coded to represent the relationship between the two categories. The Predictions per second heatmap shows the relationship between time, and the predictions per second, and the Average Latency per hour shows the relationship between time and the latency.

To properly read the heap maps, follow the hierarchy of shades from the darkest (the highest values) to the lightest shades (the lowest values).

Note

The exact quantitative values represented by the colors may be difficult to determine. Use the Performance Dashboard to see detailed results.

Model Monitoring Details dashboard#

The model monitoring details dashboard displays the real-time performance data of the selected model in detail. Model performance data provided is rich and is used to fine tune or diagnose potential performance issues that may affect business goals. The data in this dashboard changes based on the selection of the project and model.

This dashboard panes are:

Project and model summary#

Use the dropdown to change the project and model. Results are based on the last run only. The dashboard presents the following information about the project:

Endpoint ID — the ID of the deployed model

Model — user defined name for the model

Function URI — the MLRun function to access the model

Model Class — the implementation class that is used by the endpoint

Prediction/s — the average number of predictions per second over a rolling 5-minute period

Average Latency — the average latency over a rolling 1-hour period

First Request — first request for production data

Last Request — most recent request for production data

Overall Drift Analysis#

This pane has two sections: Overall Drift Analysis and Features Analysis. Results are based on the last run only. The Overall Drift Analysis pane provides performance statistics for the currently selected model.

TVD (sum and mean)

Hellinger (sum and mean)

KLD (sum and mean)

Features Analysis#

The Features Analysis pane provides details of the drift analysis for each feature in the selected model. Results are based on the last run only. This pane includes five types of statistics:

Actual (min, mean and max) — results based on actual live data stream

Expected (min, mean and max) — results based on training data

TVD

Hellinger

KLD

Incoming features graph#

This graph displays the performance of the features that are in the selected model based on sampled data points from actual feature production data. The graph displays the values of the features in the model over time.

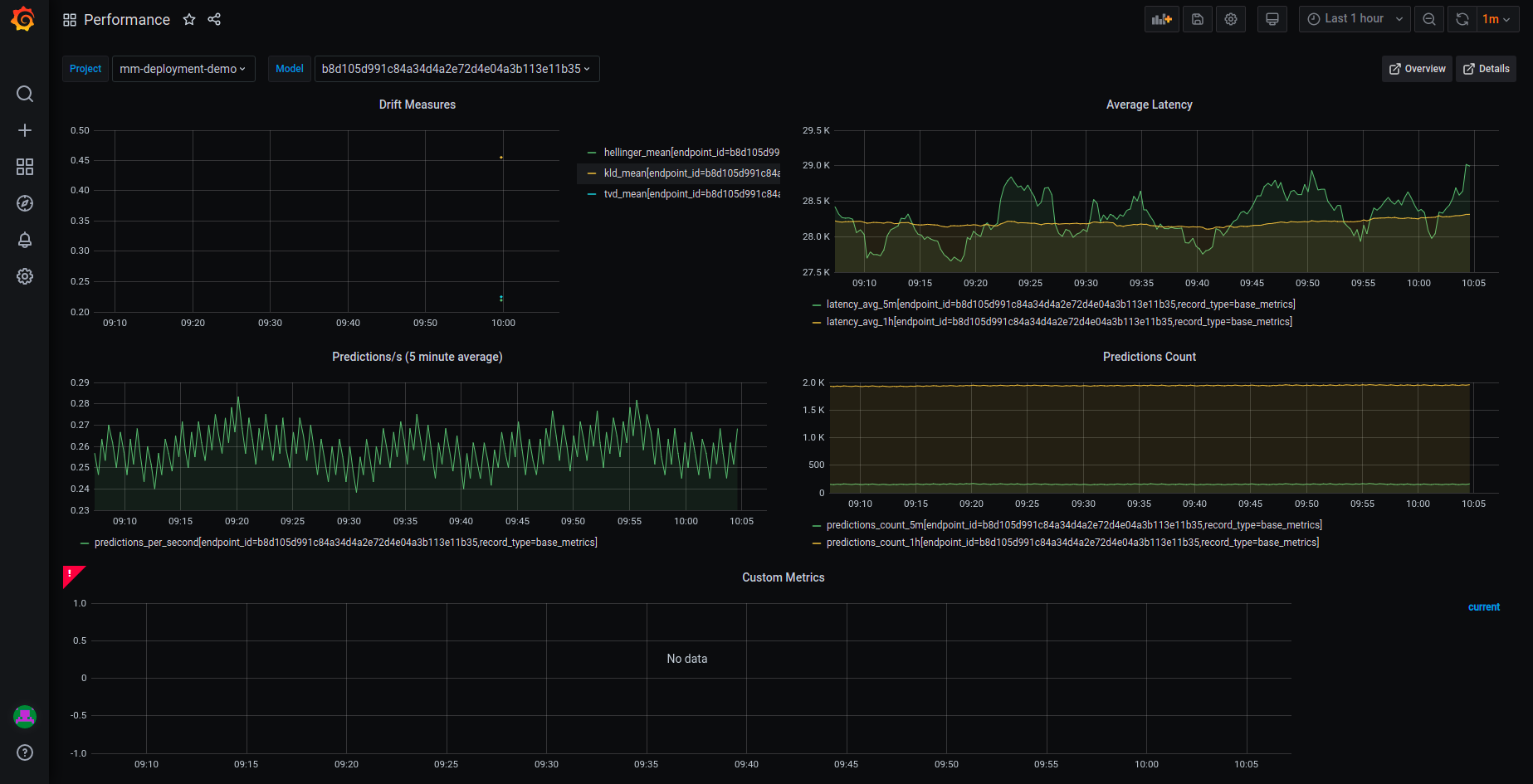

Model Monitoring Performance dashboard#

Model endpoint performance displays performance details in graphical format.

This dashboard has five graphs:

Drift Measures — the overall drift over time for each of the endpoints in the selected model

Average Latency — the average latency of the model in 5 minute intervals, for 5 minutes and 1 hour rolling windows

Predictions/s — the model predictions per second displayed in 5 second intervals for 5 minutes (rolling)

Predictions Count — the number of predictions the model makes for 5 minutes and 1 hour rolling windows

Custom Metrics — user-defined custom metrics

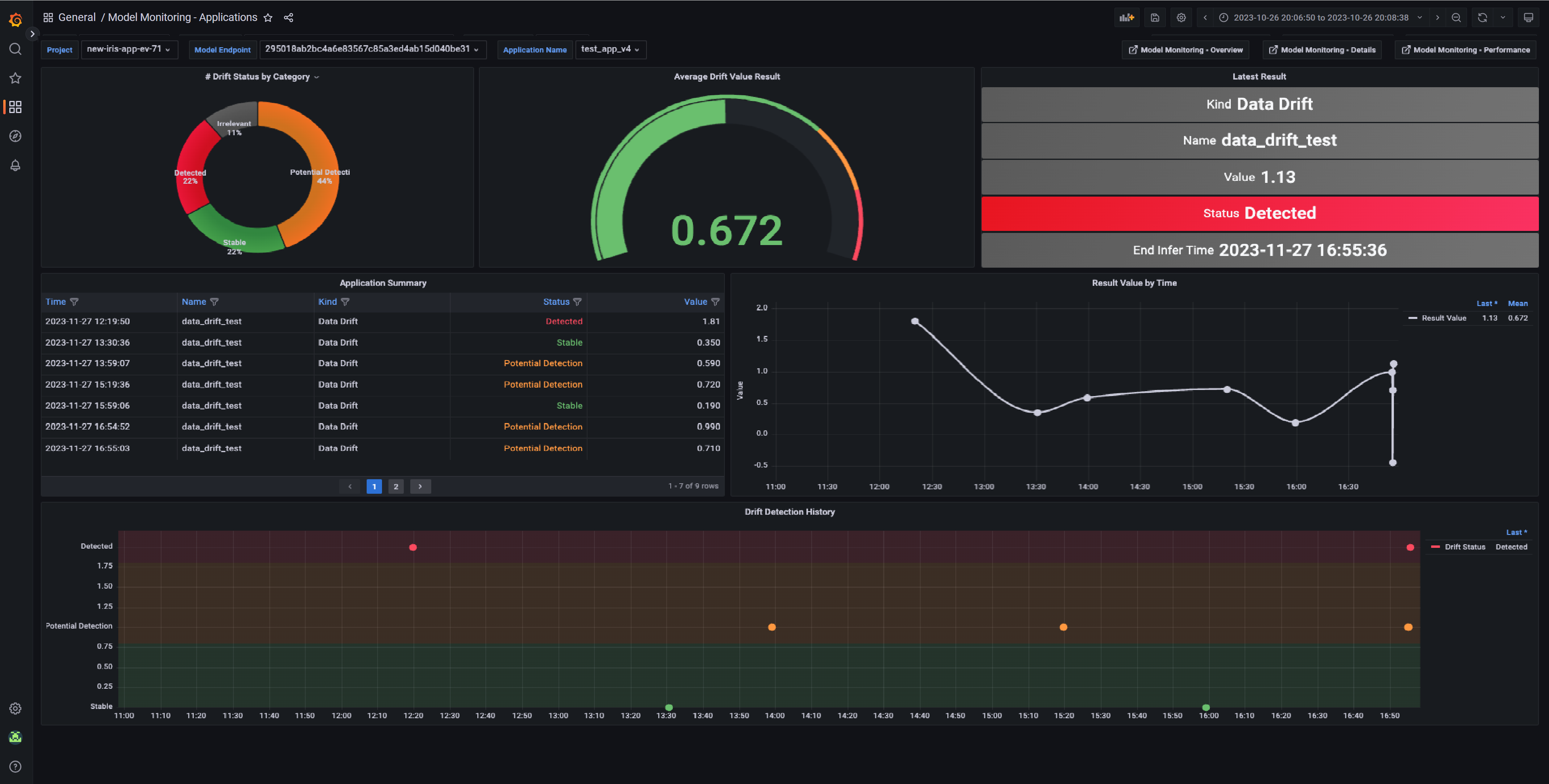

Model Monitoring Applications dashboard#

Model monitoring of deployed applications displays drift details in graphical format.

Draft Status by Category — the percentages of potential drift, no drift and detected drift

Average Drift Value Result — the average of the potential and the detected drift values

Latest Result — summary of the latest draft result

Application Summary — table summary of the application results including the schedule time, the metric name, metric kind, result status, and result numerical value.

Result Value by Time — user-defined metric by time. This value is calculated based on user defined function and represents a specific numerical result of the selected application by time.

Drift Detection History — drift status by time. Using this chart you can detect different types of drifts by time such as gradual drift or incremental drift. To learn more about different types of drift, see Model monitoring. Results that do not represent drift ("irrelevant") are filtered from this chart.

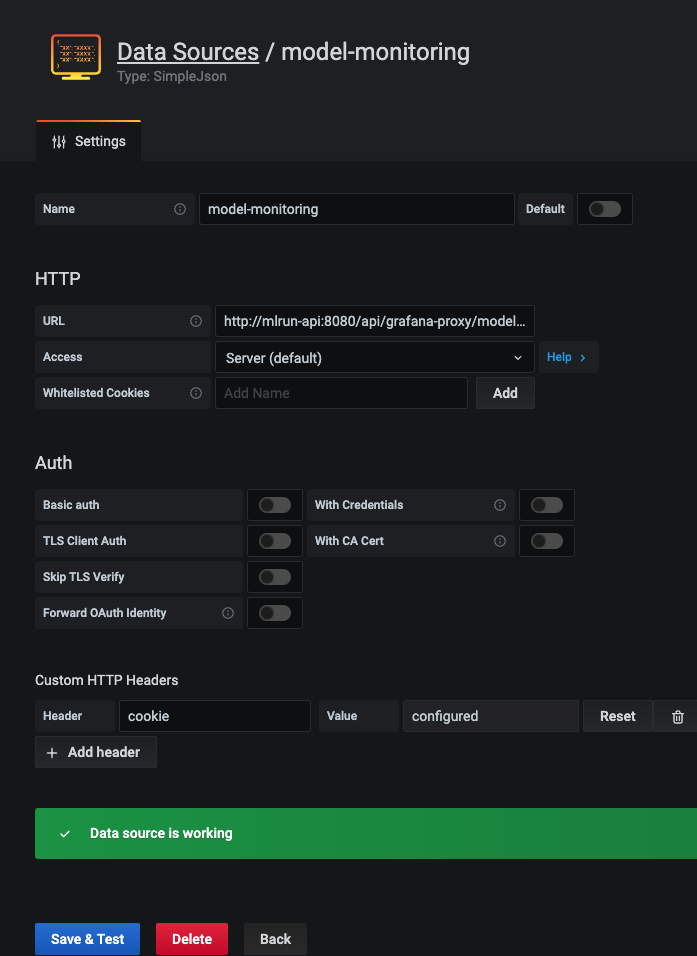

Configuring Grafana datasources#

Verify that you have a Grafana service running in your Iguazio MLOps Platform.

If you do not have a Grafana service running, please follow Creating a Service to add it to your platform.

When you create the service: In the Custom Parameters tab, Platform data-access user parameter, select a user with access to the /user/pipelines directory.

In addition, you will have to add access keys to your model-monitoring data source:

Open your Grafana service.

Navigate to Configuration | Data Sources.

Press model-monitoring.

In Custom HTTP Headers, configure the cookie parameter. Set the value of

cookieto:session=j:{"sid": "<YOUR ACCESS KEY>"}Press Save & Test for verification. You'll receive a confirmation with either a success or a failure message.

Configuring Grafana dashboards#

From Iguazio 3.5.3, the overview, details, and performance dashboards can be found under Dashboards | Manage | private. You can also import the latest dashboards versions by downloading them using the following links:

Iguazio 3.5.3 and higher

Iguazio up to and including 3.5.2

Upload dashboards to your Grafana service by:

Navigate to your Grafana service in the Services list and press it.

Press the dashboards icon in left menu.

In the Dashboard Management screen, press IMPORT, and select one file to import. Repeat this step for each dashboard.

Note

You need to train and deploy a model to see results in the dashboards. The dashboards immediately display data if you already have a model that is trained and running with production data.