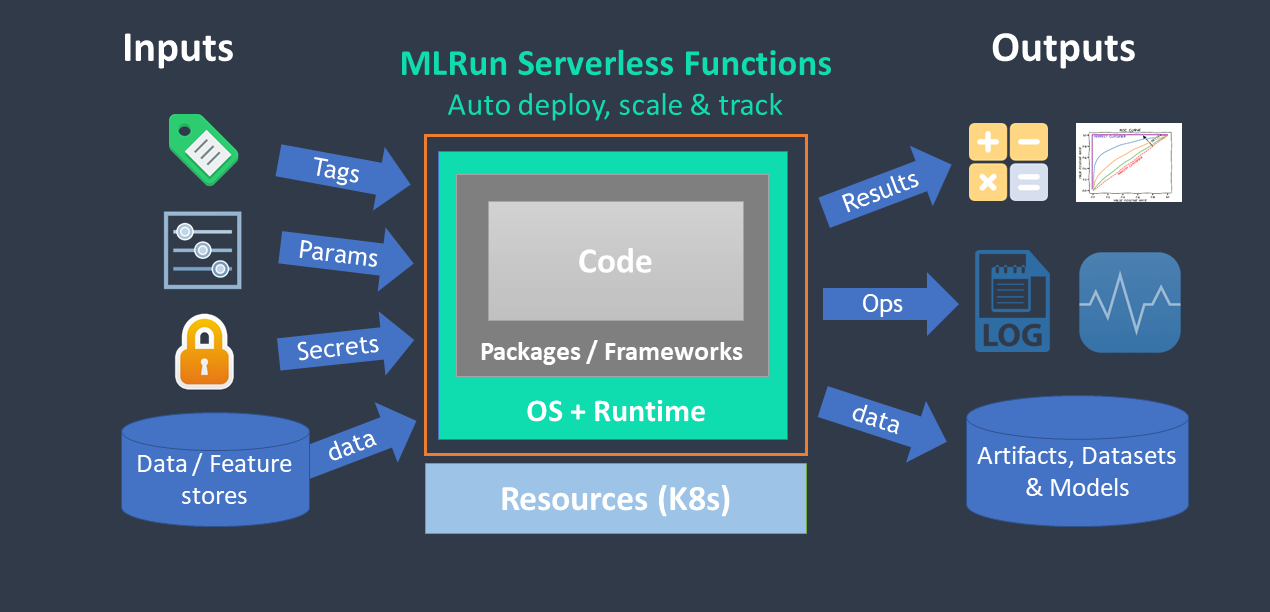

Functions architecture


MLRun supports:

- Configuring function resources (replicas, CPU/GPU/memory limits, volumes, spot vs. on-demand nodes, pod priority, node affinity). See Managing job resources.
- Iterative tasks: automatic, distributed execution of many tasks with varying parameters (hyperparameters). See Hyperparam and iterative jobs.
- Horizontal scaling of functions across multiple containers. See Distributed and Parallel Jobs.
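To make the iterative-task idea concrete, here is a minimal plain-Python sketch of how a grid of hyperparameters can expand into one parameter set per task. It does not use MLRun's API or reflect its internal implementation. The helper name `expand_grid` and the parameter names `learning_rate` and `batch_size` are hypothetical, chosen only for illustration.

```python
from itertools import product


def expand_grid(hyperparams):
    """Return one parameter dict per combination of the listed values."""
    names = list(hyperparams)
    value_lists = [hyperparams[name] for name in names]
    # product() yields every combination, varying the last name fastest.
    return [dict(zip(names, values)) for values in product(*value_lists)]


# Hypothetical search space: 2 learning rates x 3 batch sizes = 6 tasks.
tasks = expand_grid({"learning_rate": [0.01, 0.1], "batch_size": [32, 64, 128]})

for index, params in enumerate(tasks):
    print(index, params)
```

Each resulting dict is the input for one child task. A grid search of this kind grows multiplicatively with every added parameter, which is why running the tasks in parallel matters.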

MLRun has an open public Function Hub that stores many pre-developed functions for use in your projects.

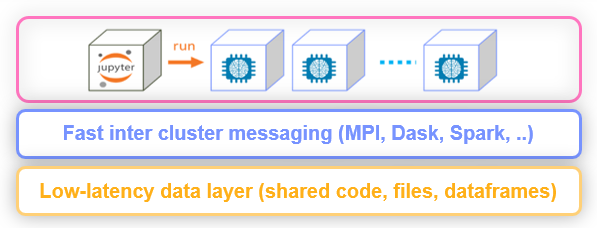

Distributed functions

Many of the runtimes support horizontal scaling. You can specify the number of replicas or the min-max value range (for auto-scaling in Dask or Nuclio). When scaling functions, MLRun uses a high-speed messaging protocol and shared storage (volumes, objects, databases, or streams). MLRun runtimes handle the orchestration and monitoring of the distributed task.
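The two ways of specifying scale described above (a fixed replica count or a min-max range) can be sketched in plain Python as follows. This is an illustrative model only, not MLRun's API: the `ScalingSpec` class, its field names, and the `resolve` method are assumptions introduced here to show how a requested replica count would be clamped to the allowed range.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScalingSpec:
    """Hypothetical scaling request: a fixed count or a min-max range."""

    replicas: Optional[int] = None
    min_replicas: Optional[int] = None
    max_replicas: Optional[int] = None

    def __post_init__(self):
        has_fixed = self.replicas is not None
        has_min = self.min_replicas is not None
        has_max = self.max_replicas is not None
        if has_fixed and (has_min or has_max):
            raise ValueError("use either replicas or a min-max range, not both")
        if not has_fixed and not (has_min and has_max):
            raise ValueError("set replicas, or both min_replicas and max_replicas")
        if has_fixed and self.replicas < 1:
            raise ValueError("replicas must be at least 1")
        if not has_fixed and not 0 <= self.min_replicas <= self.max_replicas:
            raise ValueError("need 0 <= min_replicas <= max_replicas")

    def resolve(self, desired: int) -> int:
        """Return the replica count to run for a desired load-based count."""
        if self.replicas is not None:
            # Fixed scaling ignores the desired count.
            return self.replicas
        # Auto scaling clamps the desired count into [min, max].
        return max(self.min_replicas, min(desired, self.max_replicas))


fixed = ScalingSpec(replicas=4)
elastic = ScalingSpec(min_replicas=1, max_replicas=8)

print(fixed.resolve(10))    # 4
print(elastic.resolve(10))  # 8
print(elastic.resolve(0))   # 1
```

Rejecting a spec that mixes both styles keeps the intent unambiguous: a function either runs at a fixed size or lets the runtime scale it within bounds.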